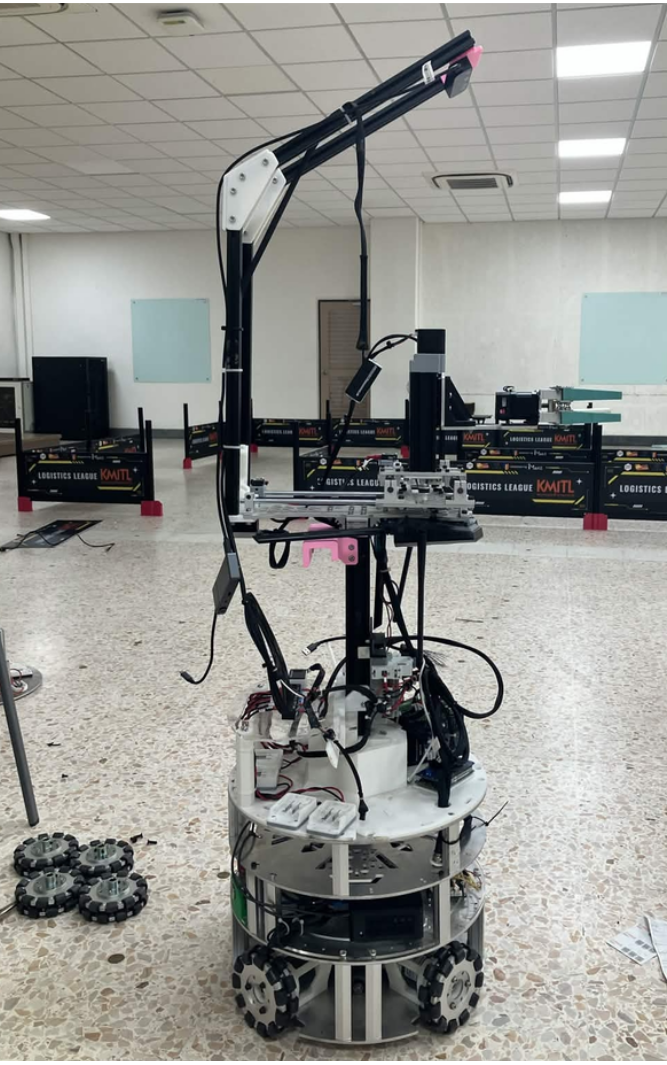

System Overview

Developed an autonomous system that integrates a 3-axis Cartesian gantry onto an omnidirectional mobile base. The mobile base perceives its environment with a 2D LiDAR, using geometric filtering and RANSAC-based line detection to find the docking station face; a phased docking controller with safety mechanisms such as anti-windup and deadband filtering then parks the base reliably. The mounted gantry is driven by open-loop stepper motors sequenced by a finite-state machine (FSM), and uses an Intel RealSense 3D camera for visual perception. By combining YOLO object segmentation with depth filtering (median and 30th percentile) and an affine-matrix hand-eye calibration that maps camera coordinates into the gantry frame, the robot identifies, locates, and grasps objects autonomously.

Tech Stack

3D Vision Grasping

Integrated YOLO segmentation with RealSense depth data for object detection and 3D localization.

LiDAR Auto-Docking

RANSAC-based line detection and phased control for precision parking.

Full System Integration

Unified ROS 2 state machine coordinating mobile navigation and gantry kinematics.
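A minimal sketch of how such a top-level state machine might sequence the two subsystems is shown below, written in plain Python rather than as a ROS 2 node so that it runs without `rclpy`. The `Mission` states, the `step()` inputs (`docked`, `target_found`, `grasp_ok`), and the retry policy are hypothetical; in an actual ROS 2 node, `step()` would typically run in a timer callback, with its inputs filled from subscriptions or action results.

```python
from enum import Enum, auto


class Mission(Enum):
    IDLE = auto()
    DOCKING = auto()
    PERCEIVING = auto()
    GRASPING = auto()
    DONE = auto()
    FAILED = auto()


class MissionFSM:
    """Hypothetical top-level sequencer for the mobile base and the gantry."""

    def __init__(self, max_retries=2):
        self.state = Mission.IDLE
        self.max_retries = max_retries  # hypothetical: extra grasp attempts after a failure
        self.retries = 0

    def step(self, docked=False, target_found=False, grasp_ok=None):
        """Apply at most one transition using the latest subsystem reports."""
        if self.state is Mission.IDLE:
            self.state = Mission.DOCKING  # start by parking at the station
        elif self.state is Mission.DOCKING and docked:
            self.state = Mission.PERCEIVING  # base is parked: run detection + depth
        elif self.state is Mission.PERCEIVING and target_found:
            self.state = Mission.GRASPING  # hand the 3D target to the gantry
        elif self.state is Mission.GRASPING and grasp_ok is not None:
            if grasp_ok:
                self.state = Mission.DONE
            elif self.retries < self.max_retries:
                self.retries += 1
                self.state = Mission.PERCEIVING  # re-detect the object and try again
            else:
                self.state = Mission.FAILED
        return self.state
```

Calling `step()`, then `step(docked=True)`, `step(target_found=True)`, and `step(grasp_ok=True)` walks the machine through DOCKING, PERCEIVING, GRASPING, and DONE. Keeping every transition behind one method makes the sequencing easy to unit-test without hardware.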

Engineering Features

Built a 3-axis Cartesian gantry with open-loop stepper control, a finite-state machine (FSM) for sequencing, and deterministic waypoint generation.

Integrated YOLO segmentation with RealSense depth data, using median and 30th-percentile depth filtering, for precise 3D back-projection.

Implemented affine-matrix-based hand-eye calibration that maps camera-frame coordinates into the gantry frame.

Developed LiDAR-based perception using geometric filtering, range gating, and RANSAC-based station face detection.

Engineered a phased docking controller with safety mechanisms (anti-windup, deadband filtering, bailout recovery) for the mobile base.
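The gantry items above (open-loop stepper control, deterministic waypoint generation) can be illustrated with a short sketch that turns a target position into a fixed pick-and-place sequence and then into per-axis step counts. Everything specific here is an assumption, not the project's configuration: the `STEPS_PER_MM` values, Z measured downward from a raised home position, the safe travel height, and the six-waypoint sequence.

```python
from dataclasses import dataclass

# Hypothetical machine parameters (not from the project):
STEPS_PER_MM = (80.0, 80.0, 400.0)  # X/Y belt drives, Z lead screw
SAFE_Z_MM = 0.0  # Z is travel downward from home; 0 = fully raised


@dataclass(frozen=True)
class Waypoint:
    x: float
    y: float
    z: float
    gripper_closed: bool


def pick_waypoints(target, drop):
    """Fixed pick-and-place sequence: identical inputs always yield identical waypoints."""
    tx, ty, tz = target
    dx, dy = drop
    return [
        Waypoint(tx, ty, SAFE_Z_MM, False),  # travel above the object
        Waypoint(tx, ty, tz, False),  # descend to grasp height
        Waypoint(tx, ty, tz, True),  # close the gripper
        Waypoint(tx, ty, SAFE_Z_MM, True),  # lift straight up
        Waypoint(dx, dy, SAFE_Z_MM, True),  # carry to the drop location
        Waypoint(dx, dy, SAFE_Z_MM, False),  # release
    ]


def to_steps(wp):
    """Absolute step targets per axis, computed from millimetres with no feedback."""
    return tuple(round(v * k) for v, k in zip((wp.x, wp.y, wp.z), STEPS_PER_MM))


def step_deltas(waypoints, start=(0, 0, 0)):
    """Relative step counts for each move, tracking the commanded (not measured) position."""
    current, moves = start, []
    for wp in waypoints:
        target = to_steps(wp)
        moves.append(tuple(t - c for t, c in zip(target, current)))
        current = target
    return moves
```

Because the loop is open, `step_deltas` tracks the commanded position rather than a measured one, so any missed steps go undetected until the axes are re-homed.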
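For the depth step, one plausible reading of "median and 30th percentile filtering" is sketched below: collect the valid depth values under a segmentation mask, compute both statistics, and back-project the mask centroid with an ideal pinhole model. How the project actually combines the two statistics is not stated. Here `mask_depth_stats` returns both, and `locate_object` back-projects with the 30th percentile, an assumed choice on the reasoning that a lower percentile favours the nearer object surface over background pixels leaking in at mask edges. The function names, the `[v][u]` depth layout, and the 0.001 m depth scale are assumptions. With the RealSense SDK, `pyrealsense2.rs2_deproject_pixel_to_point(intrinsics, [u, v], depth)` performs the same back-projection while also accounting for the camera's distortion model.

```python
import statistics

# Sketch with hypothetical helper names; not the project's perception code.


def percentile(values, q):
    """Linearly interpolated q-th percentile (the same convention as NumPy's default)."""
    s = sorted(values)
    pos = (len(s) - 1) * q / 100.0
    lo = int(pos)
    hi = min(lo + 1, len(s) - 1)
    return s[lo] + (s[hi] - s[lo]) * (pos - lo)


def mask_depth_stats(mask_pixels, depth_image, depth_scale=0.001):
    """Median and 30th-percentile depth (metres) over the valid pixels of one mask.

    mask_pixels: (u, v) pixel coordinates inside the segmentation mask.
    depth_image: rows of raw depth values indexed [v][u]; 0 marks "no data".
    depth_scale: metres per raw unit (assumed; read the real value from the device).
    """
    depths = [depth_image[v][u] * depth_scale for u, v in mask_pixels if depth_image[v][u] > 0]
    if not depths:
        return None
    return statistics.median(depths), percentile(depths, 30)


def back_project(u, v, z, fx, fy, cx, cy):
    """Ideal pinhole model (no lens distortion): pixel + depth -> camera-frame point."""
    return ((u - cx) * z / fx, (v - cy) * z / fy, z)


def locate_object(mask_pixels, depth_image, intrinsics):
    """Back-project the mask centroid using the 30th-percentile depth (an assumed choice)."""
    pixels = list(mask_pixels)
    stats = mask_depth_stats(pixels, depth_image)
    if stats is None:
        return None
    _median, p30 = stats
    u = sum(p[0] for p in pixels) / len(pixels)
    v = sum(p[1] for p in pixels) / len(pixels)
    fx, fy, cx, cy = intrinsics
    return back_project(u, v, p30, fx, fy, cx, cy)
```

Discarding zero-depth pixels before taking statistics matters because RealSense reports missing measurements as 0, which would otherwise drag both the median and the low percentile toward the camera.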
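An affine hand-eye calibration of this kind is commonly estimated by least squares from point correspondences: at least four non-coplanar points measured both by the camera and in gantry coordinates (for example, by jogging the gantry tip onto each point). The sketch below fits a 3x4 matrix `A` so that `gantry ≈ A · [x, y, z, 1]`, solving one small normal-equation system per gantry axis in pure Python. The procedure and the names `solve`, `fit_affine`, and `apply_affine` are illustrative assumptions rather than the project's code.

```python
# Sketch with hypothetical names; not the project's calibration code.


def solve(a, b):
    """Solve the small dense system a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(a)
    m = [list(row) + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("singular system: use at least 4 well-spread, non-coplanar points")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def fit_affine(cam_pts, gantry_pts):
    """Least-squares 3x4 matrix A such that gantry ~= A @ [x, y, z, 1] for each pair."""
    rows = [[x, y, z, 1.0] for x, y, z in cam_pts]
    ata = [[sum(r[i] * r[j] for r in rows) for j in range(4)] for i in range(4)]  # M^T M
    A = []
    for k in range(3):  # one independent least-squares problem per gantry axis
        atb = [sum(r[i] * g[k] for r, g in zip(rows, gantry_pts)) for i in range(4)]  # M^T y_k
        A.append(solve(ata, atb))
    return A


def apply_affine(A, p):
    """Map a camera-frame point into gantry coordinates."""
    x, y, z = p
    return tuple(row[0] * x + row[1] * y + row[2] * z + row[3] for row in A)
```

A general affine matrix, unlike a pure rotation plus translation, can also absorb per-axis scale errors and slight non-orthogonality between the gantry axes, which is one reason to prefer it on a Cartesian gantry; the trade-off is that it needs more well-spread calibration points to stay well conditioned.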
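The LiDAR pipeline (range gating followed by RANSAC line fitting on the station face) might look like the sketch below. The input mirrors the `ranges`, `angle_min`, and `angle_increment` fields of a ROS `sensor_msgs/msg/LaserScan` message; the gate limits, inlier tolerance, iteration count, and the `face_pose` output (perpendicular distance and normal bearing of the face) are hypothetical choices, not values from the project.

```python
import math
import random

# Sketch with hypothetical parameters; not the project's perception code.


def scan_to_points(ranges, angle_min, angle_increment, r_min=0.05, r_max=2.0):
    """Polar scan -> Cartesian (x, y) points, keeping only returns inside the range gate."""
    points = []
    for i, r in enumerate(ranges):
        if r_min <= r <= r_max:  # also rejects inf and NaN, whose comparisons are False
            a = angle_min + i * angle_increment
            points.append((r * math.cos(a), r * math.sin(a)))
    return points


def ransac_line(points, iters=200, tol=0.01, min_inliers=10, seed=0):
    """Dominant line as (a, b, c) with a*x + b*y + c = 0 and a^2 + b^2 = 1, plus its inliers."""
    if len(points) < 2:
        return None, []
    rng = random.Random(seed)  # seeded so results are reproducible
    best, best_inliers = None, []
    for _ in range(iters):
        (x1, y1), (x2, y2) = rng.sample(points, 2)
        a, b = y2 - y1, x1 - x2  # normal of the segment through the two samples
        norm = math.hypot(a, b)
        if norm < 1e-9:
            continue  # coincident samples do not define a line
        a, b = a / norm, b / norm
        c = -(a * x1 + b * y1)
        inliers = [p for p in points if abs(a * p[0] + b * p[1] + c) < tol]
        if len(inliers) > len(best_inliers):
            best, best_inliers = (a, b, c), inliers
    if len(best_inliers) < min_inliers:
        return None, []
    return best, best_inliers


def face_pose(line):
    """Perpendicular distance from the sensor origin to the line, and the bearing of its normal."""
    a, b, c = line
    if c > 0:  # flip so the normal points from the sensor toward the face
        a, b, c = -a, -b, -c
    return -c, math.atan2(b, a)
```

In practice the inliers would usually be refit with least squares, and a lateral offset for docking could be taken from the midpoint of the inlier segment; both refinements are omitted here for brevity.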
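A phased docking controller with anti-windup and deadband filtering could be structured as below. This is a sketch under stated assumptions: the phase names, the PI form (anti-windup by conditional integration, one of several common schemes), the gains and tolerances, the sign conventions, and the interpretation of bailout recovery as "creep backwards until the face is seen again, then restart alignment" are all hypothetical. The `face` input is assumed to come from the LiDAR station-face detection, reduced to distance, lateral offset, and yaw error.

```python
from dataclasses import dataclass


@dataclass
class PI:
    kp: float
    ki: float
    limit: float  # output saturation (m/s or rad/s)
    deadband: float = 0.0  # errors smaller than this produce no command
    integral: float = 0.0

    def update(self, error, dt):
        if abs(error) < self.deadband:
            return 0.0  # inside the deadband: no output, integral frozen
        candidate = self.integral + error * dt
        u = self.kp * error + self.ki * candidate
        if abs(u) < self.limit:
            self.integral = candidate  # anti-windup: integrate only while unsaturated
        return max(-self.limit, min(self.limit, u))


class DockingController:
    """Hypothetical phases ALIGN -> APPROACH -> DOCKED, with BAILOUT if the face is lost."""

    def __init__(self, stop_dist=0.05, dock_tol=0.01, align_tol=0.03, max_lost=10):
        # Hypothetical gains and limits, not tuned values from the project.
        self.yaw = PI(kp=1.5, ki=0.2, limit=0.5, deadband=0.01)
        self.lat = PI(kp=1.0, ki=0.1, limit=0.15, deadband=0.005)
        self.fwd = PI(kp=0.8, ki=0.0, limit=0.2)
        self.stop_dist, self.dock_tol = stop_dist, dock_tol
        self.align_tol, self.max_lost = align_tol, max_lost
        self.phase, self.lost = "ALIGN", 0

    def step(self, face, dt):
        """face: (distance_m, lateral_m, yaw_rad) or None. Returns (phase, vx, vy, wz)."""
        if self.phase == "DOCKED":
            return self.phase, 0.0, 0.0, 0.0
        if face is None:
            self.lost += 1
            if self.lost > self.max_lost:
                self.phase = "BAILOUT"
            # Hold still while briefly lost; creep backwards once bailout triggers.
            vx = -0.05 if self.phase == "BAILOUT" else 0.0
            return self.phase, vx, 0.0, 0.0
        self.lost = 0
        dist, lateral, yaw = face
        if self.phase == "BAILOUT":
            self.phase = "ALIGN"  # face reacquired: restart from the first phase
        if self.phase == "ALIGN":
            # Omnidirectional base: fix lateral offset and heading before moving forward.
            vy, wz = self.lat.update(lateral, dt), self.yaw.update(yaw, dt)
            if abs(yaw) < self.align_tol and abs(lateral) < self.align_tol:
                self.phase = "APPROACH"
            return self.phase, 0.0, vy, wz
        err = dist - self.stop_dist  # APPROACH: close the gap while still correcting
        if err <= self.dock_tol:
            self.phase = "DOCKED"
            return self.phase, 0.0, 0.0, 0.0
        return (self.phase, self.fwd.update(err, dt),
                self.lat.update(lateral, dt), self.yaw.update(yaw, dt))
```

Aligning heading and lateral offset before driving forward exploits the omnidirectional base, and the deadband keeps sensor jitter near zero error from producing a stream of tiny corrections. The docking tolerance is kept separate from any forward deadband so the final approach cannot stall just short of the stop distance.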
